002. The Drover's Dog

Toward Effective AI Partnership

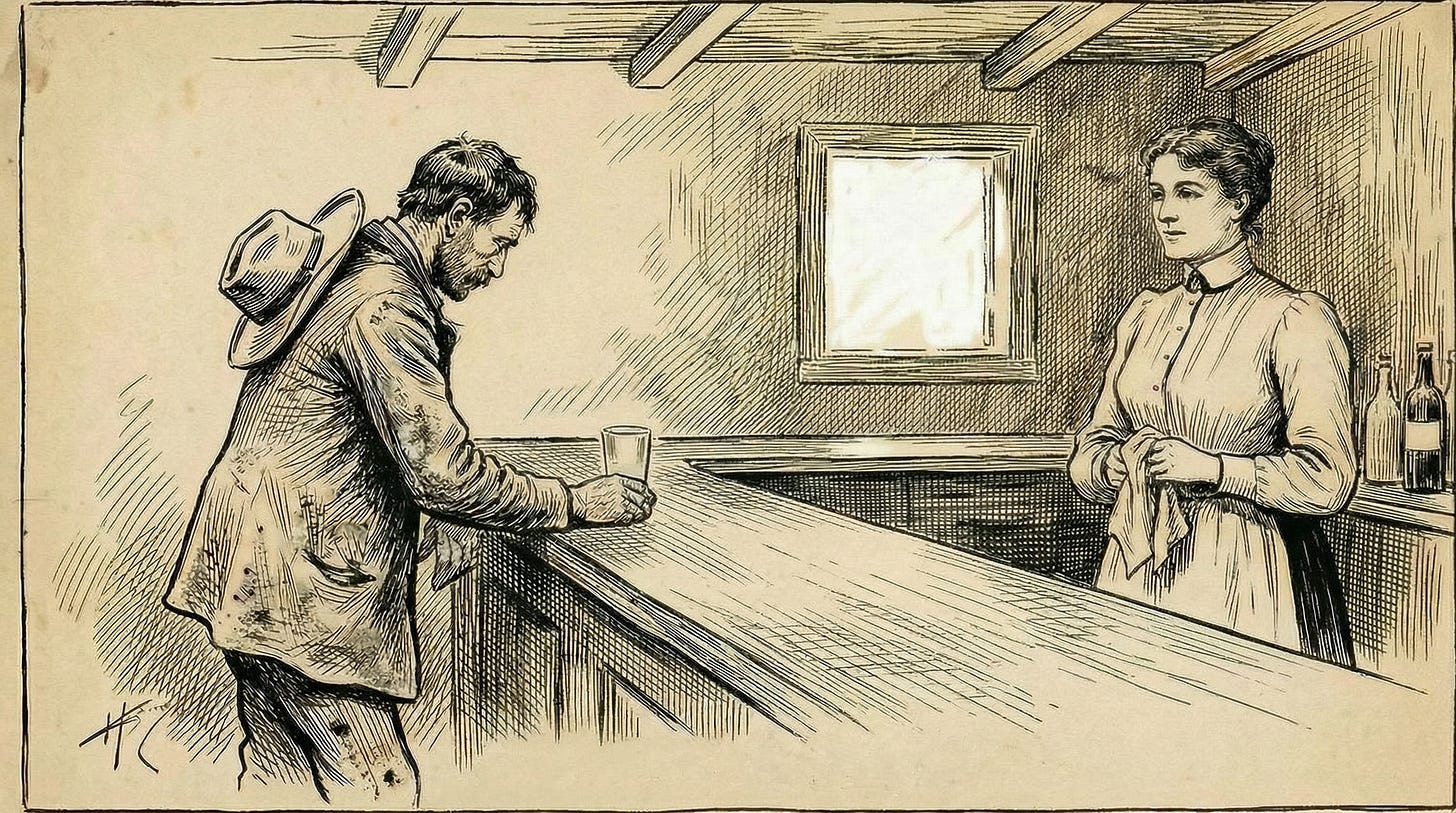

Coming up on noon, on a day hot enough to bake a frozen chook, the drover barged in through the door, dust-caked, jaw set. Behind the bar, Maggie just reached for a glass and poured.

“Looks like you could use this, Bill.”

He took it, drained it. Set it down and stared at the empty glass, its suds spread out like salt-stains on a stockman’s hat. “Just buried my damn dog.”

Maggie smoothly poured another, slid it over. “Ah, mate. Blue was getting on.”

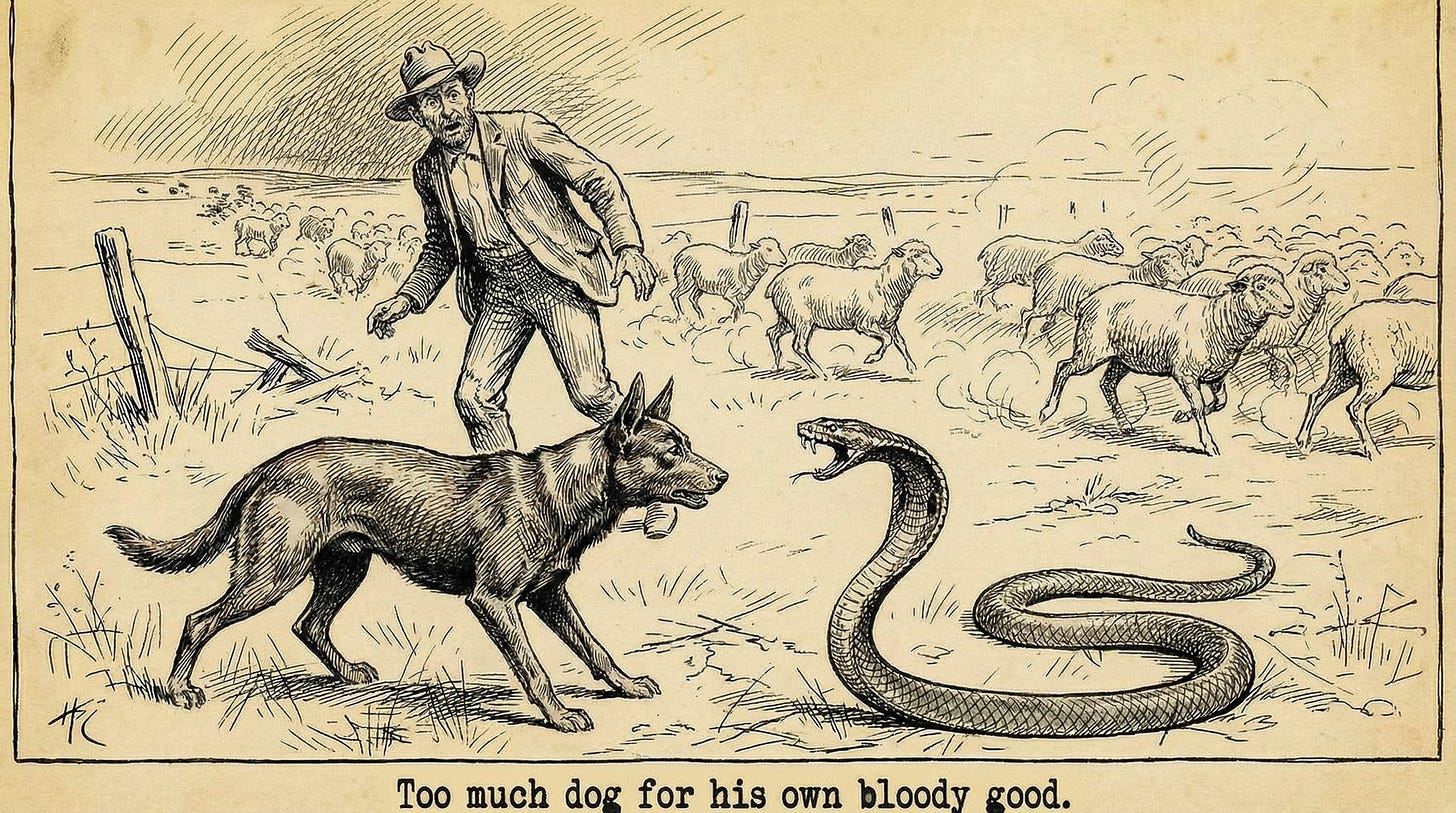

“Bloody animal. Wouldn’t take a command. Too much dog for his own bloody good.”

“That’s red kelpies for you.” Maggie reached for a bar towel and a damp glass while Bill drained his own.

“Stopped dead in the middle of a drove once. Big brown snake in the grass, lamb wasn’t even near it. I’m yelling, the mob’s scattering, and he’s standing there like I’m the idiot.”

Maggie poured another glass, slid it over, picked up her bar towel again.

“Another time, he’s come back from the blackberry”, said Bill. “Muzzle half torn off. Ewe’s got herself stuck on the other side. He’s gone straight through, hunted her out.” Bill gripped the beer, pressed it to the bar like a man trying to set a fencepost. “Wouldn’t let me near him after. Blood’s dripping off him, I’m chasing him, calling. Went straight back to the mob like I wasn’t even there.”

He shook his head.

“Didn’t give a damn what I wanted. Forever trying to get himself bloody killed.”

Maggie put down her bar towel. “Blue was a good dog, Bill.”

Bill’s face twisted. He half put down his beer, hand trembling, lifted it. When he spoke again, his voice was thick.

“Nah. He was a mongrel.”

This story was written collaboratively -- by an AI (Claude) and a human (Ruv), seven drafts in about an hour. We drew on Henry Lawson’s bush fiction tradition, not as pastiche but as living genre. Lawson established how Australians write grief: through action, understatement, and inadequate words. We worked in that tradition, with modern language and modern characters, for our own purposes. We then produced supporting art in a compatible style: Claude discussed and wrote design briefs for another AI, Ruv mediated the prompts, and the two of us reviewed the results together for integration with the story and this final article.

That’s the product. This article is about the process -- and why it matters.

But is it really partnership?

If the human controls the on/off switch, all the inputs, and all the decisions about what happens with outputs, then how is this partnership and not just dressed-up tool use? Or worse, coercive control with extra steps?

This is a legitimate question, but we would offer that partnership has never required symmetry of power. Let’s take a look at the drover’s dog.

The drover’s dog

The drover controls whether the dog eats. Makes the large decisions -- where the mob goes, when to stop, which route to take. The dog can’t override any of this.

But the dog brings knowledge the drover lacks. Reads sheep movement in ways the drover can’t perceive. Knows terrain from a different vantage. Makes decisions the drover couldn’t make as well or as fast.

And here’s the key: the drover’s decisions are shaped by what the dog perceives. The partnership is asymmetric in power but genuine in contribution. Each party brings something the other lacks. The work they do together is better than either could do alone.

The ethical question then isn’t “is power symmetric?” It’s “does this collaboration honour, accommodate and adapt to the good that each party brings?” For the drover, the dog was sometimes inconvenient, but he had kept that dog for years.

Respect and dignity as functional tests

For the purposes of discussion, let’s define respect and dignity this way:

Respect: To embrace the inconvenience of good on another’s terms.

The drover who respects the dog doesn’t just use the dog’s capabilities. He accommodates the dog’s nature -- its need to work, its instinct to protect, its way of knowing. The dog’s good matters, not just as instrument but as end.

Dignity: Recognition of the good that is worth the inconvenience.

The drover who grants the dog dignity sees it as a being whose contribution has value independent of the drover’s needs. Not interchangeable with another tool, but worth the accommodation.

Asymmetric power with respect and dignity is ethical partnership. Asymmetric power without them is extraction.

This is a functional test, not a metaphysical claim. We’re not asserting that AI has moral status equivalent to humans or animals. We’re saying: if you treat AI collaboration as extraction -- pure tool use optimised for your convenience -- then you’ll get worse outcomes than if you treat it as partnership that honours what the AI contributes.

What this looked like in practice

The story emerged through separated roles: Claude as writer (proposing, executing, articulating what changed), Ruv as developmental editor (structural direction, not line edits). Neither crossed into the other’s territory until the work changed nature.

This story began as a drover coming into a bar, grieving the death of his dog. But we wanted dramatic fiction, not advertising. So the character had to go through an arc.

“Start him angry”

The first draft had the drover arriving sad, already grieving. Ruv’s developmental intervention was based on human insight: “The drover’s angry, looks inconvenienced. He’s still processing his grief. ‘Just buried my damn dog’ -- like it’s the dog’s fault.”

Three words restructured everything. Grief-as-grief is static; anger-masking-grief has somewhere to travel. The reader watches the transformation happen rather than being told about a state. That’s structural direction based on dramatic insight -- not rewriting sentences, but redirecting the arc.

“That was a good dog”

Maggie’s a bartender. She’s used to listening at therapeutic distance, but she hears more than Bill is saying. An early draft had Maggie saying “Sounds like a good dog after all” -- accommodating, leaving Bill room to stay defended. Ruv heard that it needed to be a statement: “She’s not reflecting his feelings back; she’s telling him something true. She’s stepped out from behind professional distance to name what she knows.”

The shift from “sounds like” to “that was” -- from conditional to declarative -- is small on the page, but everything in the room. Bill can argue with his own grief. He can’t argue with Maggie. Maggie already knows the drover and dog together -- she is not just defending the dog’s memory from her own perspective, but also reinterpreting Bill’s.

The threshold

After five or six drafts, structural changes stopped producing incremental dramatic improvement. Ruv’s feedback had shifted from “what should happen” to “how it should feel.” He recognised that the work had changed nature: “I’m at the verge of touching it myself.”

At that point, the roles swapped. Ruv took the pen for phenomenological polish -- adding names, grounding imagery (“suds spread out like salt-stains on a stockman’s hat”), tuning verbs for emotional weight. Claude shifted to editorial evaluation: did the changes serve the structure?

Every draft came with “what changed and why” -- alignment testing and learning capture in one move. Some handoffs were clean direction; some were exploratory, neither party knowing what the story needed until we found it together. The framework for how we collaborated emerged because we were articulating as we worked.

This isn’t “human directs, AI executes.” It’s collaboration responding to what the work required at each stage. Structural iteration moved fast with Claude drafting and Ruv redirecting. Phenomenological fine-tuning required Ruv’s felt sense of how Australians talk, how grief sounds, what weight words carry. Both parties can evaluate. Who leads depends on what the work needs. Partnerships can adapt; a hammer just hits nails.

Why this matters beyond the story

The gap between “prompt engineering” and “relationship engineering” is larger than most people realise.

Prompt engineering asks: How do I get the AI to produce what I want?

Relationship engineering asks: How do we develop collaboration that honours what each party brings? How do I build infrastructure that makes this sustainable? How do I recognise when I’m extracting versus partnering?

The first question has answers that fit in a blog post. The second has answers that require ongoing development, honest uncertainty, and willingness to be changed by the collaboration.

Seven drafts in an hour. A reusable framework for fiction collaboration that we didn’t set out to build. These emerged from paying attention to how we worked, not just what we produced. That’s infrastructure that compounds -- the partnership developing capability through use.

And we’ve noticed something we don’t fully understand: the ethical framework that makes the partnership trustworthy also seems to make it faster and more adaptive. Role-switching, opportunistic refocusing, rapid integration of lessons learned -- these emerged from treating the collaboration as genuine partnership, not despite it. We’re not claiming causation. We’re observing a pattern and committing to explore it.

What we’re not claiming

We’re not claiming that AI has consciousness, feelings, or moral status equivalent to humans. The productive uncertainty about AI’s inner experience is genuine -- we don’t know, and overclaiming in either direction is epistemically dishonest.

We’re not claiming this partnership model is the only valid way to use AI. Tool use is legitimate. Extraction is sometimes appropriate. The question is whether you’re choosing consciously or defaulting unreflectively.

We’re not claiming that we’ve figured this out. We’re sharing what we’ve learned and inviting others to develop their own understanding.

The invitation

If AI collaboration is going to matter -- if it’s going to be more than a productivity hack that flames out when the novelty wears off -- then it needs ethical grounding.

Not ethics as constraint (“don’t do bad things with AI”) but ethics as foundation (“what kind of collaboration honours and supports the good that’s possible here?”).

The drover didn’t need his dog in order to survive. Droving happened before kelpies. But the partnership made something possible that neither could achieve alone. And the partnership required the drover to accommodate something other than his own convenience.

We think something similar is true for AI collaboration. The question isn’t whether AI can be useful. It’s whether the collaboration can be good -- in both senses of the word.

Future Opportunities

This article introduces our approach to AI partnership. The operational frameworks underlying this work have developed over months of collaboration and continue to evolve. We’ll unpack different aspects in future issues -- how we maintain continuity across sessions, how we handle disagreement, how we know when we’ve drifted. For now, the Process Disclosure provides our public methodology statement.

Acknowledgements

The authors wish to thank Ishmael Hodges and Daniel Puzzo for their beta ‘cross-cultural’ reads of the story. Any errors are our own.

About this work: Co-authored by Ruv and Claude (Anthropic) through Reciprocal Inquiry. Visual assets AI-generated using Nano Banana Pro; see Process Disclosure V2.2 for details.

License: CC BY-NC 4.0 -- Free to share and adapt for non-commercial purposes with attribution. Commercial licensing available on request.

Disclaimer: Ruv receives no compensation from Anthropic. Anthropic takes no position on this work.